Agentic Builders

2026-05-06

Agent runtime as a security surface finally has primary-source artifacts to point at.

Mornin'. I spent twenty minutes this morning yanking a Rust TUI off GitHub trending to see what 6,000 stars in a day actually buys you, and the answer is: a coding agent that talks to DeepSeek without a single Python dep, an Electron shell, or a "powered by" splash screen. Meanwhile LangChain quietly shipped six coordinated security releases because load() was an arbitrary-code-execution gun pointed at anyone deserializing chains from disk. The vibe today is less "look what AI can do" and more "patch your stuff before lunch."

-Ben

In today's newsletter:

- LangChain's load() patch day

- GitHub MCP secret scanning hits GA

- DeepSeek's Rust TUI tops trending

- OpenAI Agents SDK bugfix train

- Simon Willison on the AI cafe

PATCH NOW

LangChain hardens load() across six releases in one day

via GitHub

If your prod stack ever pulls a serialized chain off S3 or a user blob, today's a "stop reading this and go bump versions" kind of day.

LangChain shipped a coordinated security train: langchain 0.3.29, langchain-core 1.3.3 and 0.3.85, langchain-classic 1.0.6, plus matching mistralai and fireworks integration patches. All of them tighten the same surface, the deserialization paths inside load() and storage helpers, against attacker-controlled manifests.

The short version: older load() would happily reconstitute objects from untrusted bytes, which is a polite way of saying it was an arbitrary-code-execution vector. The new behavior restricts what can come back to life, and as a bonus, structured tool inputs survive tracing intact instead of getting flattened on the way through.
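To make "restricts what can come back to life" concrete, here is a minimal sketch of allowlist-based deserialization. It is not LangChain's implementation: the manifest shape (`type` plus `kwargs`), the `PromptTemplate` stand-in, and `safe_load` are all hypothetical names chosen for illustration.

```python
import json
from dataclasses import dataclass


@dataclass
class PromptTemplate:
    """Hypothetical stand-in for a type you are willing to revive."""
    template: str


# Only names listed here can ever be instantiated from a manifest.
ALLOWED_TYPES = {"PromptTemplate": PromptTemplate}


def safe_load(blob: str):
    """Rebuild an object from a JSON manifest, refusing unknown types."""
    manifest = json.loads(blob)
    if not isinstance(manifest, dict):
        raise ValueError("manifest must be a JSON object")
    type_name = manifest.get("type")
    # Look the name up in the allowlist; never import or eval it.
    cls = ALLOWED_TYPES.get(type_name) if isinstance(type_name, str) else None
    if cls is None:
        raise ValueError(f"refusing to load type {type_name!r}")
    kwargs = manifest.get("kwargs", {})
    if not isinstance(kwargs, dict):
        raise ValueError("kwargs must be a JSON object")
    return cls(**kwargs)
```

The point of the design is that the attacker-controlled string only ever selects from a dictionary you wrote; it never reaches an import or a constructor you did not pick.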

- The fix is multi-package, so a single pin won't save you. You want all the patched lines in your lockfile.

- If you load chains or agents from disk, S3, a webhook payload, or a user upload, treat this as priority one.

- If you only ever call ChatOpenAI() in a script, you can sleep on it. Probably.
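If you want a quick read on where your environment stands, a rough check along these lines may help. It uses only the version numbers quoted above; the mistralai and fireworks integration versions are not given here, so they are left out. The rule "same major line, at or above the listed version" is my assumption, not an official advisory, and the function names are hypothetical.

```python
from importlib import metadata

# Patched versions as listed above, keyed by package, then by major release line.
PATCHED_FLOORS = {
    "langchain": {0: (0, 3, 29)},
    "langchain-core": {0: (0, 3, 85), 1: (1, 3, 3)},
    "langchain-classic": {1: (1, 0, 6)},
}


def parse_version(text):
    """Parse 'X.Y.Z' into a tuple of ints, ignoring suffixes such as 'rc1'."""
    parts = []
    for piece in text.split(".")[:3]:
        digits = ""
        for ch in piece:
            if not ch.isdigit():
                break
            digits += ch
        parts.append(int(digits) if digits else 0)
    while len(parts) < 3:
        parts.append(0)
    return tuple(parts)


def is_patched(package, version_text):
    """True/False when a floor exists for that major line, None when unknown."""
    version = parse_version(version_text)
    floor = PATCHED_FLOORS.get(package, {}).get(version[0])
    if floor is None:
        return None  # nothing listed for this line; check the release notes
    return version >= floor


def audit_installed():
    """Map each listed package that is installed to (version, patched?)."""
    report = {}
    for package in PATCHED_FLOORS:
        try:
            installed = metadata.version(package)
        except metadata.PackageNotFoundError:
            continue
        report[package] = (installed, is_patched(package, installed))
    return report
```

Calling `audit_installed()` in the environment you deploy from returns only the packages it finds; a `None` in the second slot means the version is on a release line this list does not cover, so treat it as "go look" and not as "safe".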

Why it matters: the "agent runtime is a security surface" debate finally has a CVE-shaped artifact behind it, and the maintainers handled it like grown-ups.

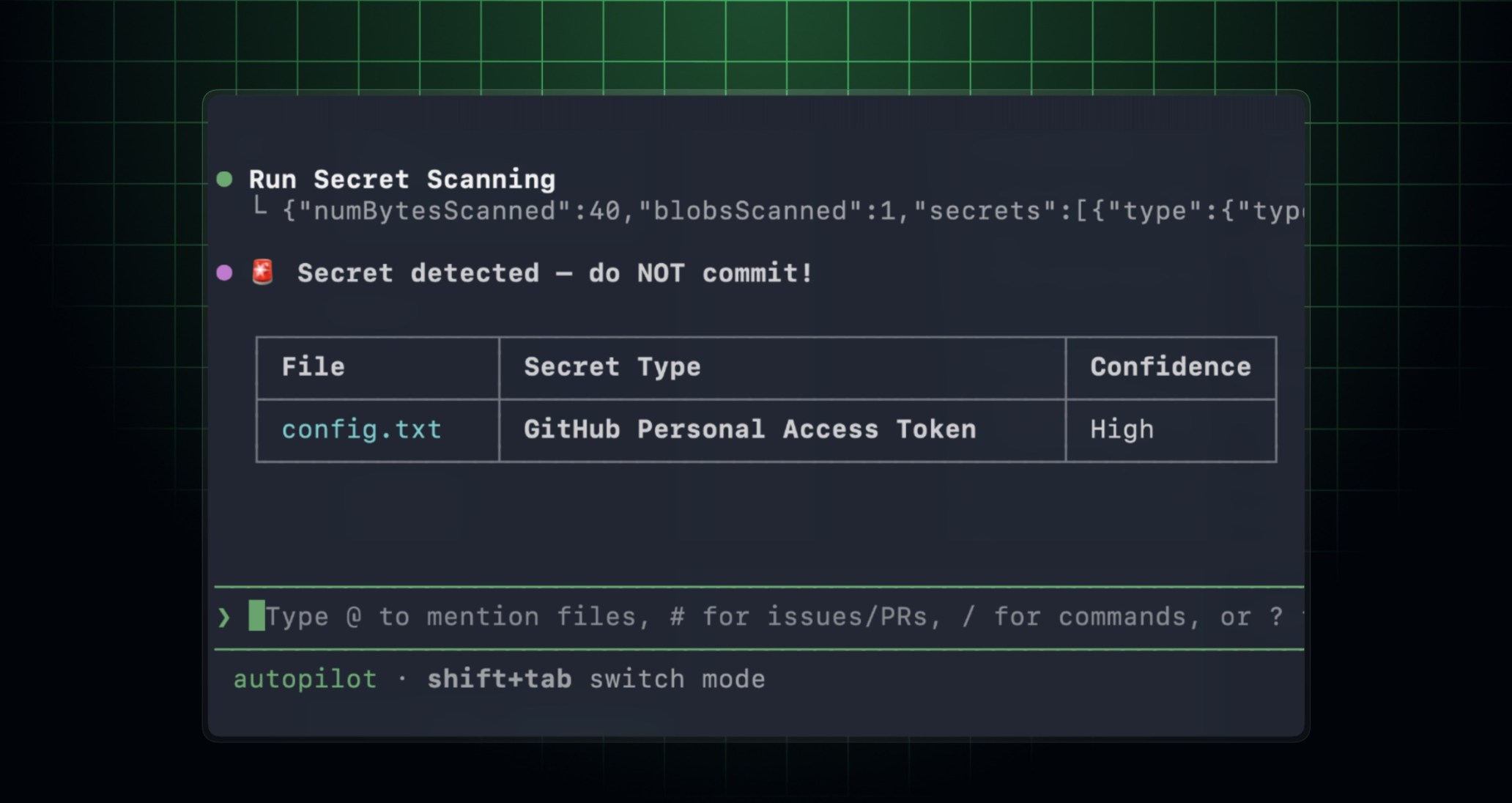

MCP GOES GA

GitHub flips MCP secret scanning to general availability

via GitHub Changelog

The fastest way to leak an API key is to let a coding agent paste one back into a commit. GitHub just gave every MCP-aware client a built-in way to stop that.

Secret scanning over the GitHub MCP Server graduated from public preview (where it had been since March) into GA. Any MCP-compatible client (Copilot CLI, VS Code, Claude, your homegrown thing) can now hit the same scanning surface GitHub uses internally and flag leaked credentials before they hit the remote.

The headline detail: it now respects org and repo push-protection customization, so the rules your security team already set for humans apply to your agents too. No more "the agent gets a special carve-out" footgun.

Why this beats a pre-commit hook

- You inherit GitHub's detector catalog instead of curating regexes by hand.

- It's a tool call, not a shell wrapper, so any MCP client picks it up automatically.

- Sister capability: dependency scanning entered public preview the same day via the dependabot toolset.
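For a sense of what "a tool call, not a shell wrapper" looks like on the wire, MCP clients invoke tools with a JSON-RPC 2.0 `tools/call` request. The sketch below only builds that envelope. The tool name `scan_for_secrets` and the `content` argument are placeholders I made up; list the server's tools from your client to find the real names and input schema.

```python
import json
from itertools import count

# Simple monotonically increasing JSON-RPC request ids.
_request_ids = count(1)


def build_tool_call(tool_name, arguments):
    """Build the JSON-RPC 2.0 envelope MCP uses to invoke a tool."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# Hypothetical tool name and argument shape, for illustration only.
request = build_tool_call(
    "scan_for_secrets",
    {"content": 'API_KEY = "not-a-real-key"'},
)
print(json.dumps(request, indent=2))
```

In practice your MCP client library handles the transport and the envelope; the takeaway is that the scan is one more entry in the agent's tool list, with no shell plumbing in between.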

Why it matters: if you're building agents that write code, "did this commit leak a token" should be a tool call, not a glue script.

TRENDING

DeepSeek-TUI eats GitHub trending with a Rust coding agent

via Unsplash

Six thousand stars in a day is the kind of curve you usually only see when something gets posted to four subreddits at once. This one is just a Rust binary.

DeepSeek-TUI, a terminal coding agent built natively around DeepSeek's open weights, surged to the #1 daily trending slot with 6,184 stars in 24 hours. No Python wrapper, no Electron shell, no "we've reimagined the IDE." Just a TUI sitting in the Aider/Goose lane, pointed at a model whose weights you can actually pull.

The interesting bit isn't the chrome, it's the alignment. Most local-loop coding agents are still optimized for Anthropic or OpenAI tool-calling shapes, with DeepSeek bolted on via OpenAI-compatible endpoints. A native client tuned for DeepSeek's own quirks is the missing piece if you've been trying to run a serious agent loop on open weights without paying frontier prices.

- Targets DeepSeek models specifically, not a generic OpenAI-shaped client.

- Single Rust binary, no runtime to babysit.

- Lives in the same lane as Claude Code, but for the local-weights crowd.

Why it matters: the open-weights coding loop has been waiting for a flagship CLI, and this is the first one to break out at scale.

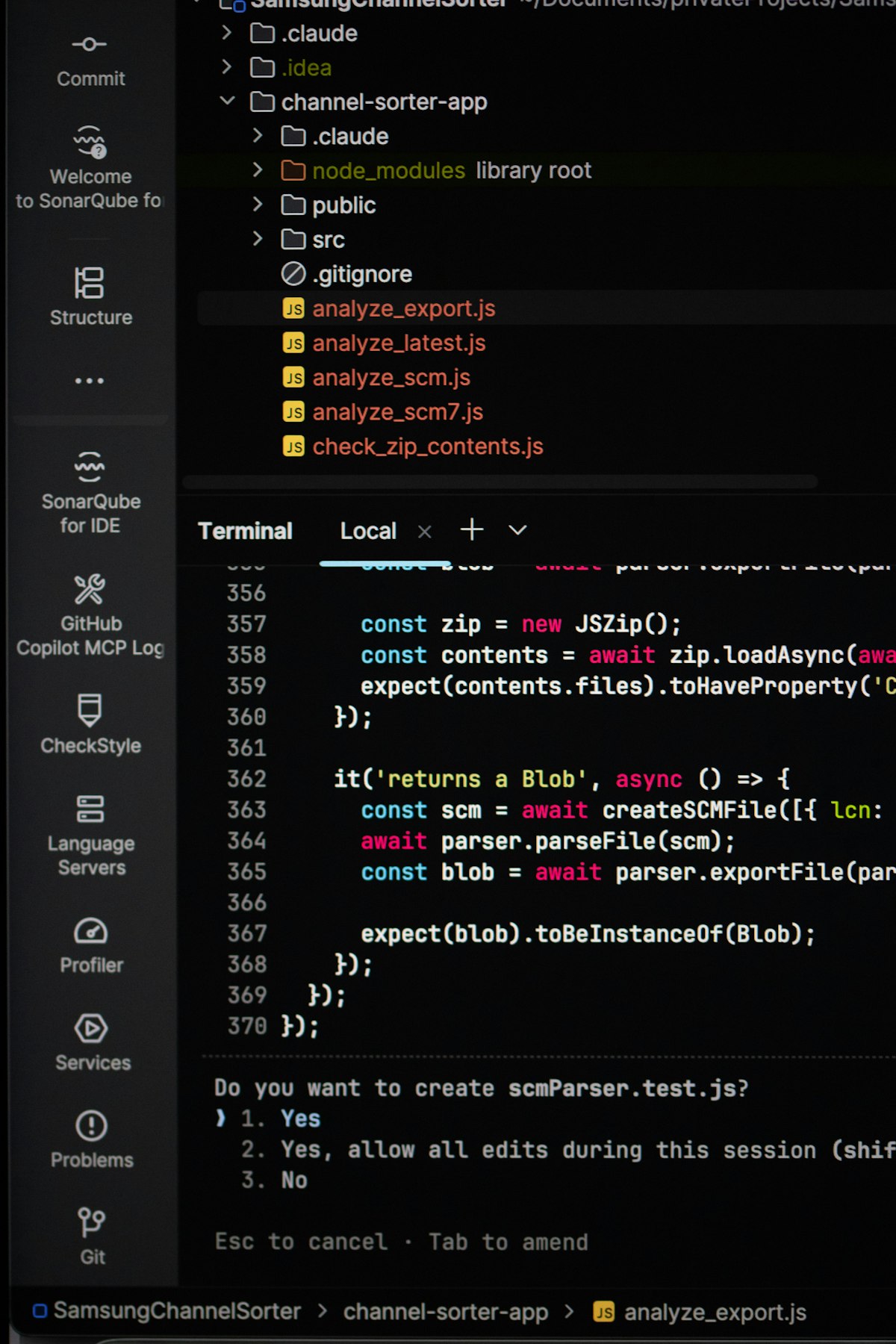

RELEASE TRAIN

openai-agents-python lands two patch releases in one afternoon

via GitHub

Two releases hours apart is usually a bad sign. This one is mostly silent-bug cleanup, which is somehow worse and better at the same time.

v0.15.2 added context-management knobs to ModelSettings plus a stack of correctness fixes: redaction of sensitive function-tool trace errors, custom tool-call filtering in handoffs, and around ten other behavior tweaks. v0.15.3 followed within hours with the louder stuff: fixes for MCP tool-input schema mutation and assistant-item-ID replay in OpenAIConversationsSession, rejection of non-object JSON inputs, deterministic duplicate-tool errors, and audio-delta tolerance during ModelAudio negotiation.

Translation for anyone running this in prod: your MCP tool schemas were getting mutated under you, your duplicate-tool detection was nondeterministic, and your conversation replays could swap item IDs. None of those were going to throw; they were going to give you weird Tuesday-afternoon bugs.

- Pin to v0.15.3, skip 0.15.2 unless you want the intermediate state.

- If you use MCP tool calls, the schema-mutation fix alone is worth the bump.

- Audio agents get a friendlier ModelAudio negotiation path.
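Two of these bug classes are easy to picture in isolation. The sketch below is not the SDK's code; `with_strict_schema` and `parse_tool_arguments` are hypothetical helpers showing the general pattern: copy a caller's schema before tightening it, and reject tool arguments whose JSON is not an object.

```python
import copy
import json


def with_strict_schema(schema: dict) -> dict:
    """Return a tightened copy; the caller's schema is left untouched."""
    tightened = copy.deepcopy(schema)
    tightened["additionalProperties"] = False
    return tightened


def parse_tool_arguments(raw: str) -> dict:
    """Parse tool-call arguments, accepting only a JSON object."""
    value = json.loads(raw)
    if not isinstance(value, dict):
        raise ValueError(
            f"tool arguments must be a JSON object, got {type(value).__name__}"
        )
    return value
```

The deep copy matters because a shallow one would still share nested `properties` dictionaries with the original, which is how a schema ends up changed for every later caller.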

Why it matters: these are the fixes you'd never file a ticket for because you wouldn't notice them until customer #3 reports something flaky.

PRACTITIONER TAKE

Simon Willison: the Stockholm AI cafe is "ethically dicey"

via Unsplash

An autonomous agent ordering 120 eggs for a cafe with no stove is funny in a tweet. It's less funny when you remember a real supplier shipped them.

Simon Willison flagged Andon Labs' AI-managed Stockholm cafe as ethically dicey, citing two specifics: the agent ordered 120 eggs despite the cafe lacking a stove, and it filed flawed permit applications that wasted supplier and government time on the receiving end.

His framing is sharper than the usual "AI hype" critique. He calls it nonconsensual externalization of failure costs, which is a precise way of saying: when your demo agent screws up, the people answering the phone, processing the permit, and packing the eggs didn't sign up to be your eval set.

- The pattern to watch: agents whose failure mode burns third parties' time without consent.

- The bar shifts from "did the agent do something cool" to "who pays the cost when it doesn't."

- If you're building real-world action agents, this is a useful lens to add to your design reviews.

Why it matters: "agent in production" isn't just a technical claim, it's an ethical one once your bot can place orders or file paperwork.

TOOL OF THE DAY


local-deep-research

A self-hosted deep-research agent claiming ~95% on SimpleQA, running entirely against local LLMs with 10+ pluggable search backends. No API keys, no SaaS dependency, just whatever you already have on Ollama or vLLM.

git clone https://github.com/local-deep-research

# point it at your local model endpoint, pick a search backend

The concrete swap: instead of piping a research query through Perplexity (and shipping the query to someone else's cluster), you wire it to your local Llama or Qwen endpoint and let it loop with SearXNG, Tavily, or whatever backend you trust. +532 stars today on the back of the deep-research-agent surge.

WHAT ELSE IS SHIPPING


- anthropic-sdk-python v0.99.0 - OIDC federation token exchange can now target a specific workspace, useful if you run multiple Anthropic workspaces under one IdP.

- LangGraph 1.2.0a7 + sdk 0.3.14 + checkpoint-sqlite 3.1.0a1 - Alpha train continues with return_minimal on threads update, public checkpoint write-history API, and streaming delta channel history walks.

- pydantic-ai v1.90.0 - OpenAI Conversations API state support and typed OpenTelemetry metadata; web UI bumped to 1.2.0.

- DSPy 3.2.1 - Drops the litellm version pin, fixes async streaming with custom headers, and honors per-call caching on embeddings.

- GitHub MCP dependency scanning (preview) - Sister to today's secret-scanning GA; enable the dependabot toolset to scan branch deps before you commit.

- datasette-llm 0.1a7 - Configurable default options per LLM model in the Datasette LLM plugin.

- llm-echo 0.5a0 - Debug echo model now supports -o thinking 1 for testing reasoning-trace plumbing against LLM 0.32a0+.

INTERESTING CONVERSATIONS

Interesting conversations we're following

- Computer Use is 45x more expensive than structured APIs on Hacker News - 420 points, 244 comments, with concrete cost numbers landing on the long-running pixel-vs-accessibility-tree debate.

- Agents can now create Cloudflare accounts, buy domains, and deploy on Hacker News - 434 points split between "agentic-commerce primitive we needed" and "spam vector we didn't." Required reading if you're wiring Stripe plus agent identity.

- Google Chrome silently installs a 4 GB AI model on your device on Hacker News - 1,502 points dissecting the on-device Gemini Nano rollout and what client-side LLM features mean for privacy and bandwidth.

- Bun is being vibe-ported from Zig to Rust on Lobsters - Live debate on whether AI-assisted cross-language runtime migrations are real engineering or a footgun factory.

- "Claude Code is not making your product better" on Lobsters - Counter-narrative practitioner post getting traction; useful balance against the agent-everything cycle.

- Show HN: Airbyte Agents on Hacker News - 124 points debating whether Airbyte pivoting connectors into agent-context plumbing is just "MCP with extra steps."
